Santa Clara, CA, USA - July 29, 2016

NVIDIA DRIVE PX

Accelerating the Race to Self-Driving Cars.

NVIDIA DRIVE PX is the world's most advanced autonomous car platform - combining deep learning, sensor fusion, and surround vision to change the driving experience.

Photo courtesy of NVIDIA


Through the ages, artists of all types have been creating beautiful, richly detailed oil paintings on canvas that have inspired us all.

But these artists likely never dreamed that one day they would be able to choose any brush they like, pick from a limitless array of paint colors, and use the same natural twists and turns of the brush to create the colorful texture of oil, all on a digital canvas.

That’s exactly what Project Wetbrush from Adobe Research does, along with the help of the heavy-duty computational power of NVIDIA GPUs and CUDA.

Technically speaking, Project Wetbrush is the world’s first real-time simulation-based 3D painting system with bristle-level interactions.

Project Wetbrush - Adobe, NVIDIA Collaborate on World’s First Real-Time 3D Oil Painting Simulator.

Photo courtesy of NVIDIA

The painting and drawing tools most of us have used are 2D.

They’re simple and they’re fun.

But Project Wetbrush is completely different.

This is a full 3D simulation, complete with multiple levels of thickness, depth and texture.

It feels real and it’s immersive.

Oil painting on an actual canvas is full of complex interactions within the paint, between the brush and the paint, and among the bristles themselves.


Project Wetbrush simulates all this in real-time, including the complexity of maintaining paint viscosity, variable brush speeds, color mixing and even the drying of paint.

The bottom line is that it’s not easy to build a digital oil painting tool that lets artists paint so fluidly and naturally that they can ignore the technology and simply immerse themselves in their art.

Endeavor

After more than two decades pioneering visual computing, we're building a new home.

A smartly designed space that will bring teams together, push our performance, and support future growth.

Our ambitions and focus are the inspiration for the project's working name, Endeavor.

Photo courtesy of NVIDIA

When completed in late 2017, the two-story building will span 500,000 square feet, accommodate 2,500 employees, and provide two levels of underground parking.

We'll have leveraged our own revolutionary technologies, like Iray, to craft a structure unlike any other in the world.


Photo as of July 21, 2016.

Photo courtesy of NVIDIA

Its unique design - based on the triangle, the fundamental building block of computer graphics - will deliver functionality and efficiency in an open, sweeping environment.


http://endeavor.nvidia.com/

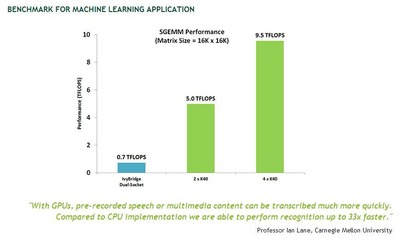

MACHINE LEARNING

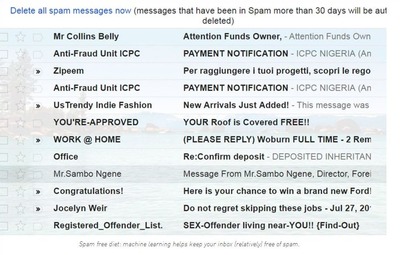

Data scientists in both industry and academia have been using GPUs for machine learning to make groundbreaking improvements across a variety of applications including image classification, video analytics, speech recognition and natural language processing.

In particular, Deep Learning – the use of sophisticated, multi-level “deep” neural networks to create systems that can perform feature detection from massive amounts of unlabeled training data – is an area that has been seeing significant investment and research.

https://developer.nvidia.com/deep-learning

Although machine learning has been around for decades, two relatively recent trends have sparked its widespread use: the availability of massive amounts of training data, and the powerful, efficient parallel computing provided by GPUs.

http://www.nvidia.com/object/what-is-gpu-computing.html

GPUs are used to train these deep neural networks using far larger training sets, in an order of magnitude less time, using far less datacenter infrastructure.

GPUs are also being used to run these trained machine learning models to do classification and prediction in the cloud, supporting far more data volume and throughput with less power and infrastructure.


Early adopters of GPU accelerators for machine learning include many of the largest web and social media companies, along with top tier research institutions in data science and machine learning.

With thousands of computational cores and 10-100x application throughput compared to CPUs alone, GPUs have become the processor of choice for data scientists working with big data.

NVIDIA GPUs - The Engine of Deep Learning

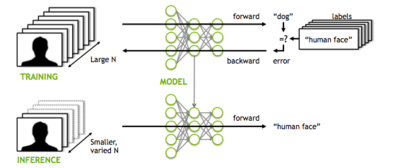

Traditional machine learning uses hand-crafted feature extraction and modality-specific machine learning algorithms to label images or recognize voices.

However, this method has several drawbacks in both time-to-solution and accuracy.

Today’s advanced deep neural networks use algorithms, big data, and the computational power of the GPU to change this dynamic.

Machines are now able to learn at a speed, accuracy, and scale that are driving true artificial intelligence.

https://blogs.nvidia.com/blog/2016/01/12/accelerating-ai-artificial-intelligence-gpus/

Deep learning is used in the research community and in industry to help solve many big data problems such as computer vision, speech recognition, and natural language processing.


Practical examples include:

• Vehicle, pedestrian and landmark identification for driver assistance

• Image recognition

• Speech recognition and translation

• Natural language processing

• Life sciences

The NVIDIA Deep Learning SDK provides high-performance tools and libraries to power innovative GPU-accelerated machine learning applications in the cloud, data centers, workstations, and embedded platforms.

https://developer.nvidia.com/deep-learning-software

What’s the Difference Between Artificial Intelligence, Machine Learning, and Deep Learning?

This is the first of a multi-part series explaining the fundamentals of deep learning by long-time tech journalist Michael Copeland.

Posted on July 29, 2016

by Michael Copeland

Artificial intelligence is the future.

Artificial intelligence is science fiction.

Artificial intelligence is already part of our everyday lives.

All those statements are true; it just depends on what flavor of AI you are referring to.

For example, when Google DeepMind’s AlphaGo program defeated South Korean Master Lee Se-dol in the board game Go earlier this year, the terms AI, machine learning, and deep learning were used in the media to describe how DeepMind won.

And all three are part of the reason why AlphaGo trounced Lee Se-dol.

But they are not the same thing.


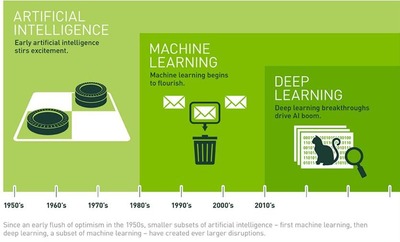

The easiest way to think of their relationship is to visualize them as concentric circles: AI, the idea that came first, is the largest; machine learning, which blossomed later, sits inside it; and deep learning, which is driving today’s AI explosion, fits inside both.

From Bust to Boom

AI has been part of our imaginations and simmering in research labs since a handful of computer scientists rallied around the term at the Dartmouth Conferences in 1956 and birthed the field of AI.


In the decades since, AI has alternately been heralded as the key to our civilization’s brightest future, and tossed on technology’s trash heap as a harebrained notion of over-reaching propellerheads.

Frankly, until 2012, it was a bit of both.

Over the past few years, and especially since 2015, AI has exploded.

Much of that has to do with the wide availability of GPUs that make parallel processing ever faster, cheaper, and more powerful.

It also has to do with the simultaneous one-two punch of practically infinite storage and a flood of data of every stripe (that whole Big Data movement) – images, text, transactions, mapping data, you name it.

Let’s walk through how computer scientists have moved from something of a bust — until 2012 — to a boom that has unleashed applications used by hundreds of millions of people every day.

Artificial Intelligence — Human Intelligence Exhibited by Machines

At that summer ’56 conference, the dream of those AI pioneers was to construct complex machines, enabled by emerging computers, that possessed the same characteristics as human intelligence.


This is the concept we think of as “General AI” — fabulous machines that have all our senses (maybe even more), all our reason, and think just like we do.

You’ve seen these machines endlessly in movies as friend — C-3PO — and foe — The Terminator.

General AI machines have remained in the movies and science fiction novels for good reason; we can’t pull it off, at least not yet.

What we can do falls into the concept of “Narrow AI”: technologies that are able to perform specific tasks as well as, or better than, we humans can.

Examples of Narrow AI in practice include image classification on a service like Pinterest and face recognition on Facebook.

These technologies exhibit some facets of human intelligence.

But how?

Where does that intelligence come from?

That gets us to the next circle, Machine Learning.

Machine Learning — An Approach to Achieve Artificial Intelligence

Machine Learning at its most basic is the practice of using algorithms to parse data, learn from it, and then make a determination or prediction about something in the world.

http://www.nvidia.com/object/machine-learning.html

So rather than hand-coding software routines with a specific set of instructions to accomplish a particular task, the machine is “trained” using large amounts of data and algorithms that give it the ability to learn how to perform the task.
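The following minimal Python sketch makes the contrast between hand-coding and training concrete. It uses a nearest-centroid classifier, one of the simplest learning algorithms. The article does not name it; it was chosen here for brevity. The two features and their values are hypothetical toy data. No rule describing a stop sign is written by hand. The program averages labeled examples and assigns a new input to whichever average is closest.

```python
from math import dist  # Euclidean distance (Python 3.8+)


def train(examples):
    """Learn one centroid (average feature vector) per label from labeled data."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def predict(centroids, features):
    """Predict the label whose learned centroid is nearest to the input."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical toy features: (redness, number of sides / 10).
training_data = [
    ((0.90, 0.8), "stop sign"),
    ((0.85, 0.8), "stop sign"),
    ((0.10, 0.4), "speed limit sign"),
    ((0.20, 0.4), "speed limit sign"),
]

model = train(training_data)
print(predict(model, (0.80, 0.8)))  # stop sign
```

Adding more labeled examples changes the centroids, and therefore the behavior, without touching the code. That is the sense in which the machine is "trained" rather than programmed.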

Machine learning came directly from the minds of the early AI crowd, and the algorithmic approaches over the years included decision tree learning, inductive logic programming, clustering, reinforcement learning, and Bayesian networks, among others.

As we know, none achieved the ultimate goal of General AI, and even Narrow AI was mostly out of reach with early machine learning approaches.


As it turned out, one of the very best application areas for machine learning for many years was computer vision, though it still required a great deal of hand-coding to get the job done.

http://www.nvidia.com/object/imaging_comp_vision.html

People would go in and write hand-coded classifiers like edge detection filters so the program could identify where an object started and stopped; shape detection to determine if it had eight sides; a classifier to recognize the letters “S-T-O-P.”

From all those hand-coded classifiers they would develop algorithms to make sense of the image and “learn” to determine whether it was a stop sign.

Good, but not mind-bendingly great.

Especially on a foggy day when the sign isn’t perfectly visible, or a tree obscures part of it.

There’s a reason computer vision and image detection didn’t come close to rivaling humans until very recently: it was too brittle and too prone to error.

Time and the right learning algorithms made all the difference.
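The following Python sketch illustrates the hand-coded style of computer vision described in this section. It applies a fixed edge-detection filter, the standard 3x3 Sobel kernel for left-to-right brightness changes. The tiny image and the detection threshold are made-up values. Every number in the pipeline is chosen by a person, which is what made such systems brittle when fog or a tree changed what the camera saw.

```python
# Standard 3x3 Sobel kernel: responds to left-to-right changes in brightness.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def filter_3x3(image, kernel):
    """Slide a 3x3 kernel over a grayscale image (cross-correlation, no padding)."""
    height, width = len(image), len(image[0])
    output = []
    for r in range(1, height - 1):
        row = []
        for c in range(1, width - 1):
            total = 0
            for kr in range(3):
                for kc in range(3):
                    total += kernel[kr][kc] * image[r + kr - 1][c + kc - 1]
            row.append(total)
        output.append(row)
    return output


def edge_columns(image, threshold=10):
    """Report image columns whose filter response exceeds a hand-picked threshold."""
    response = filter_3x3(image, SOBEL_X)
    # Output column c maps to image column c + 1 because there is no padding.
    return sorted({c + 1 for row in response for c, v in enumerate(row) if abs(v) > threshold})


# Hypothetical 4x5 image: dark on the left (0), bright on the right (9).
image = [
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
    [0, 0, 0, 9, 9],
]
print(filter_3x3(image, SOBEL_X))  # [[0, 36, 36], [0, 36, 36]]
print(edge_columns(image))         # [2, 3]
```

A real stop-sign detector of that era would chain many such hand-tuned stages, covering edges, shape, color, and lettering. Each stage has thresholds like the one above that someone had to pick.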

Deep Learning — A Technique for Implementing Machine Learning

Another algorithmic approach from the early machine-learning crowd, Artificial Neural Networks, came and mostly went over the decades.

Neural Networks are inspired by our understanding of the biology of our brains – all those interconnections between the neurons.

But, unlike a biological brain where any neuron can connect to any other neuron within a certain physical distance, these artificial neural networks have discrete layers, connections, and directions of data propagation.


You might, for example, take an image and chop it up into a bunch of tiles that are fed into the first layer of the neural network.

Individual neurons in the first layer process the data, then pass it to a second layer.

The second layer of neurons does its task, and so on, until the final layer and the final output is produced.

Each neuron assigns a weighting to its input — how correct or incorrect it is relative to the task being performed.

The final output is then determined by the total of those weightings.

So think of our stop sign example.

Attributes of a stop sign image are chopped up and “examined” by the neurons: its octagonal shape, its fire-engine red color, its distinctive letters, its traffic-sign size, and its motion or lack thereof.

The neural network’s task is to conclude whether this is a stop sign or not. It comes up with a “probability vector,” really a highly educated guess, based on the weighting. In our example the system might be 86% confident the image is a stop sign, 7% confident it’s a speed limit sign, 5% confident it’s a kite stuck in a tree, and so on; during training, the network is then told whether it is right or not.
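The following minimal, hypothetical Python sketch shows the layer-by-layer flow and the "probability vector" described above. It uses three made-up input features, a hidden layer of two neurons with a ReLU activation, and a softmax output over three classes. The weights are hand-picked purely for illustration. In a real network they would be learned during training, and production models have far more inputs, layers, and neurons.

```python
import math

LABELS = ["stop sign", "speed limit sign", "kite stuck in a tree"]


def dense(inputs, weights, biases):
    """Fully connected layer: each neuron computes a weighted sum of all inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def relu(values):
    """Keep positive activations, zero out negative ones."""
    return [max(0.0, v) for v in values]


def softmax(scores):
    """Convert raw output scores into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(features, layers):
    """Pass data through each layer in turn: ReLU on hidden layers, softmax at the end."""
    activations = features
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:
            activations = relu(activations)
    return softmax(activations)


# Hypothetical weights: 3 inputs -> 2 hidden neurons -> 3 output classes.
LAYERS = [
    ([[2.0, 2.0, 2.0], [0.0, -1.0, 1.0]], [0.0, 0.0]),
    ([[1.5, 0.0], [0.2, 0.5], [-0.5, 0.2]], [0.0, 0.0, 0.0]),
]

# Hypothetical features: (redness, octagon-likeness, letters match "STOP").
probabilities = forward([0.9, 1.0, 0.8], LAYERS)
best = max(range(len(LABELS)), key=lambda i: probabilities[i])
# Prints the most likely label and the rounded probability vector.
print(LABELS[best], [round(p, 3) for p in probabilities])
```

With these illustrative weights the network is much more confident than the 86% in the example above. That is a property of the made-up numbers, not of the technique.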

Even this example is getting ahead of itself, because until recently neural networks were all but shunned by the AI research community.

They had been around since the earliest days of AI, and had produced very little in the way of “intelligence.”

The problem was that even the most basic neural networks were very computationally intensive; it just wasn’t a practical approach.

Still, a small heretical research group led by Geoffrey Hinton at the University of Toronto kept at it, finally parallelizing the algorithms for supercomputers to run and proving the concept, but it wasn’t until GPUs were deployed in the effort that the promise was realized.

http://www.nvidia.com/object/what-is-gpu-computing.html

If we go back again to our stop sign example, chances are very good that as the network is getting tuned or “trained” it’s coming up with wrong answers — a lot.

What it needs is training. It needs to see hundreds of thousands, even millions of images, until the weightings of the neuron inputs are tuned so precisely that it gets the answer right practically every time — fog or no fog, sun or rain.

It’s at that point that the neural network has taught itself what a stop sign looks like; or your mother’s face in the case of Facebook; or a cat, which is what Andrew Ng did in 2012 at Google.
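Weight tuning of this kind is commonly done with gradient descent. The following minimal Python sketch applies that idea to a single neuron (logistic regression), trained with stochastic gradient descent on a cross-entropy loss. It is a simplification. Deep networks apply the same kind of update through many layers via backpropagation, over millions of images rather than the six hypothetical feature vectors used here. The learning rate and epoch count are arbitrary choices for this toy data.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function: squashes any score into (0, 1)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(weights, bias, features):
    """Probability that the input is a stop sign."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(examples, epochs=1000, learning_rate=0.5):
    """Stochastic gradient descent on cross-entropy loss for one neuron."""
    weights, bias = [0.0] * len(examples[0][0]), 0.0
    for _ in range(epochs):
        for features, label in examples:
            # For sigmoid + cross-entropy, d(loss)/d(score) simplifies to (p - label).
            error = predict(weights, bias, features) - label
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


# Hypothetical features: (redness, octagon-likeness); label 1 = stop sign.
examples = [
    ([0.9, 1.0], 1), ([0.8, 0.9], 1), ([0.7, 1.0], 1),
    ([0.2, 0.0], 0), ([0.9, 0.0], 0), ([0.1, 1.0], 0),
]

weights, bias = train(examples)
for features, label in examples:
    print(features, label, round(predict(weights, bias, features), 3))
```

Before training, every weight is zero and the neuron outputs 0.5 for every input. Each pass over the labeled examples nudges the weights toward the right answers, which is what "tuned" means in the text.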

Ng’s breakthrough was to take these neural networks and essentially make them huge, increasing the layers and the neurons, and then to run massive amounts of data through the system to train it.

In Ng’s case it was images from 10 million YouTube videos.

Ng put the “deep” in deep learning, which describes all the layers in these neural networks.

Today, image recognition by machines trained via deep learning is in some scenarios better than that of humans, in tasks ranging from recognizing cats to identifying indicators for cancer in blood and tumors in MRI scans.

Google’s AlphaGo learned the game, and trained for its Go match — it tuned its neural network — by playing against itself over and over and over.

Thanks to Deep Learning, AI Has a Bright Future

Deep Learning has enabled many practical applications of Machine Learning and by extension the overall field of AI.

https://developer.nvidia.com/deep-learning

Deep Learning breaks down tasks in ways that make all kinds of machine assists seem possible, even likely.

Driverless cars, better preventive healthcare, even better movie recommendations, are all here today or on the horizon.

http://www.nvidia.com/object/drive-px.html

AI is the present and the future.

With Deep Learning’s help, AI may even get to that science fiction state we’ve so long imagined.

You have a C-3PO? I’ll take it.

You can keep your Terminator.

Michael Copeland


A long-time journalist based in Silicon Valley, Michael has been in the thick of technological change since the web took hold.


Writing and editing for such outlets as WIRED, Fortune, and Business 2.0, Michael has been a part of identifying and explaining some of the most monumental (and monumentally stupid) trends in technology from the time Netscape was a thing.

Most recently he helped lead editorial efforts as a partner at venture capital firm Andreessen Horowitz.

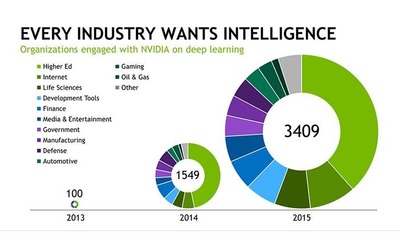

Every Industry Wants Intelligence

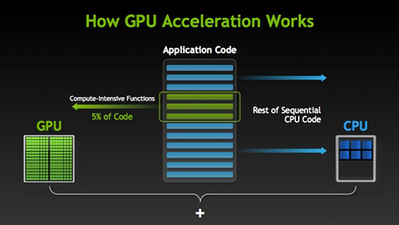

GPU-accelerated computing is the use of a graphics processing unit (GPU) together with a CPU to accelerate scientific, analytics, engineering, consumer, and enterprise applications.

Pioneered in 2007 by NVIDIA®, GPU accelerators now power energy-efficient datacenters in government labs, universities, enterprises, and small-and-medium businesses around the world.


GPUs are accelerating applications in platforms ranging from cars, to mobile phones and tablets, to drones and robots.

http://www.nvidia.com/object/what-is-gpu-computing.html

Developers want to create anywhere and deploy everywhere.

NVIDIA GPUs are available all over the world, from every PC OEM; in desktops, notebooks, servers, or supercomputers; and in the cloud from Amazon, IBM, and Microsoft.

All major AI development frameworks are NVIDIA GPU accelerated — from internet companies, to research, to startups.


No matter the AI development system preferred, it will be faster with GPU acceleration.

We have also created GPUs for just about every computing form factor so that deep neural networks (DNNs) can power intelligent machines of all kinds.

GeForce is for PC.

Tesla is for cloud and supercomputers.

Jetson is for robots and drones.

And DRIVE PX is for cars.

All share the same architecture and accelerate deep learning.

Baidu, Google, Facebook, and Microsoft were the first adopters of NVIDIA GPUs for deep learning.

This AI technology is how they respond to your spoken word, translate speech or text to another language, recognize and automatically tag images, and recommend newsfeeds, entertainment, and products that are tailored to what each of us likes and cares about.

Startups and established companies are now racing to use AI to create new products and services, or improve their operations.

In just two years, the number of companies NVIDIA collaborates with on deep learning has jumped nearly 35x to over 3,400 companies.

Industries such as healthcare, life sciences, energy, financial services, automotive, manufacturing, and entertainment will benefit by inferring insight from mountains of data.

And, with Facebook, Google, and Microsoft opening their deep-learning platforms for all to use, AI-powered applications will spread fast.

In light of this trend, Wired recently heralded the “rise of the GPU.”

The Development Platform for Autonomous Cars

Fully autonomous and driver assistance technologies powered by deep learning have become a key focus for every car manufacturer, as well as transportation services and technology companies.


The car needs to know exactly where it is, recognize the objects around it, and continuously calculate the optimal path for a safe driving experience.

This situational and contextual awareness of the car and its surroundings demands a powerful visual computing system that can merge data from cameras and other sensors, plus navigation sources, while also figuring out the safest path – all in real-time.

This autonomous driving platform is NVIDIA DRIVE PX.


NVIDIA DRIVE PX is the world's most advanced autonomous car platform - combining deep learning, sensor fusion, and surround vision to change the driving experience.

https://developer.nvidia.com/deep-learning-resources


Source: NVIDIA Corp.

http://nvidianews.nvidia.com/

ASTROMAN Magazine - 2016.07.23

World's Fastest Commercial Drone Powered by NVIDIA Jetson TX1

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=2091

ASTROMAN Magazine - 2016.07.23

A TITAN for a Titan: NVIDIA CEO Jen-Hsun Huang Presents New TITAN X to Baidu’s Andrew Ng

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=2090

ASTROMAN Magazine - 2014.04.21

New York International Auto Show Fortified with NVIDIA-Powered Audis, BMWs, Rolls-Royce

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1700

ASTROMAN Magazine - 2013.10.19

NVIDIA Introduces G-SYNC Technology for Gaming Monitors

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1574

ASTROMAN Magazine - 2013.08.10

Google, IBM, Mellanox, NVIDIA, Tyan Announce Development Group for Data Centers

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1511

ASTROMAN Magazine - 2013.07.13

NVIDIA Tesla GPU Accelerators Power World's Most Energy Efficient Supercomputer

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1497

ASTROMAN Magazine - 2012.08.12

NVIDIA Maximus Fuels Workstation Revolution With Kepler Architecture

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1293

ASTROMAN Magazine - 2011.11.12

New GPU Applications Accelerate Search for More Effective Medicines and Higher Quality Materials

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1099

ASTROMAN Magazine - 2011.08.07

NVIDIA Tesla GPUs Used by J.P. Morgan Run Risk Calculations in Minutes, Not Hours

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=1030

ASTROMAN Magazine - 2011.03.27

NVIDIA GeForce GTX 590 Is World's Fastest Graphics Card

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=930

ASTROMAN Magazine - 2011.01.16

Intel to Pay NVIDIA Technology Licensing Fees of $1.5 Billion

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=881

ASTROMAN Magazine - 2010.10.29

NVIDIA Tesla GPUs Power World's Fastest Supercomputer

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=831

ASTROMAN Magazine - 2010.10.10

New NVIDIA Quadro Professional Graphics Solutions

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=820

ASTROMAN Magazine - 2010.05.09

NVIDIA GPUs Provide Edge to 3D Animators Utilizing Autodesk Softimage 2011

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=716

ASTROMAN Magazine - 2010.02.27

NVIDIA Names University Of Maryland A CUDA Center Of Excellence

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=671

ASTROMAN Magazine - 2010.02.27

ADAM - Notion Ink Pixel Qi tablet with NVIDIA Tegra2 processor

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=670

ASTROMAN Magazine - 2010.02.27

Next Generation NVIDIA Tegra

http://www.astroman.com.pl/index.php?mod=magazine&a=read&id=669

Editor-in-Chief of ASTROMAN magazine: Roman Wojtala, Ph.D.
